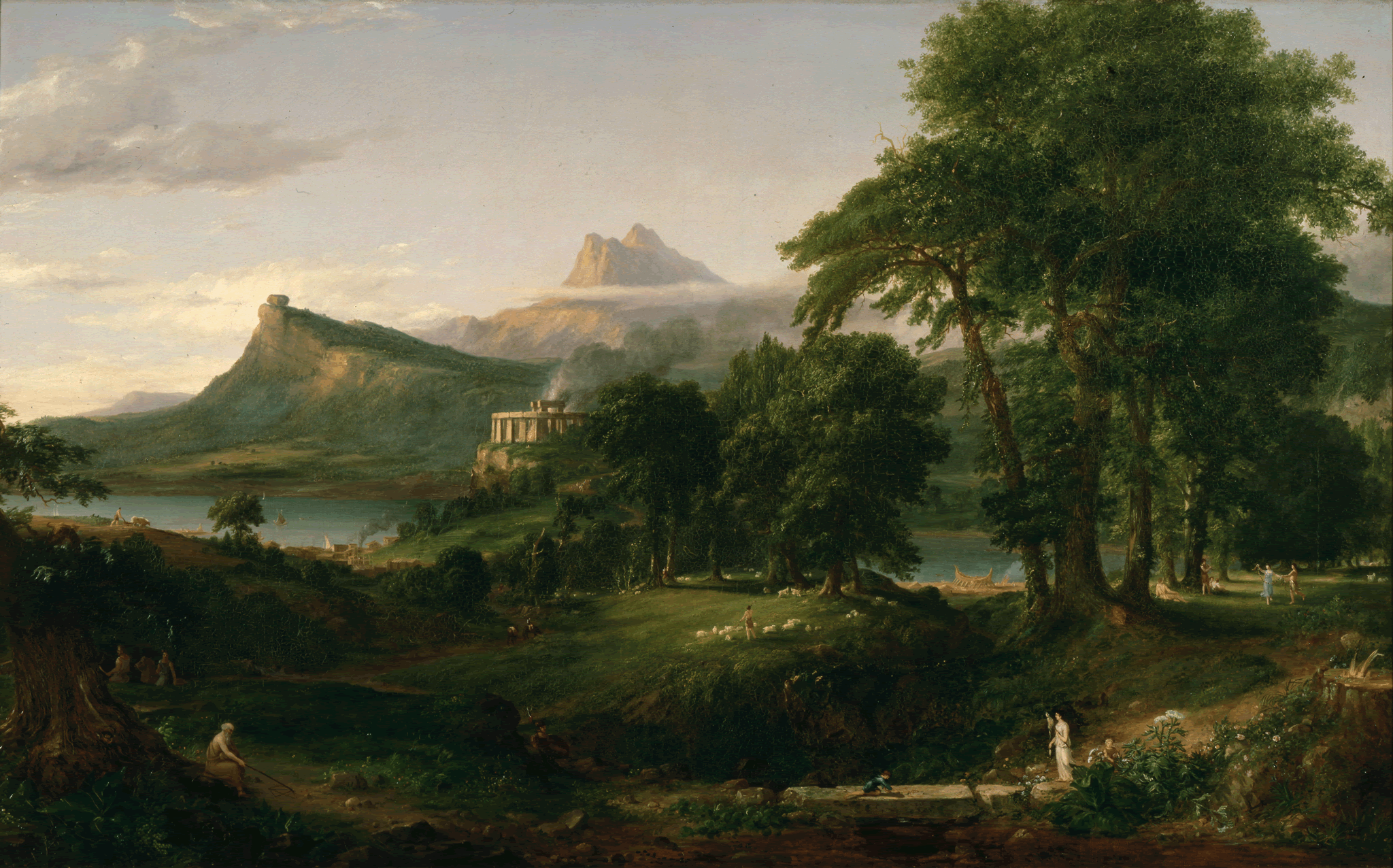

Vision

Visions of an Agentic World

by Aarik — March 2026

Agent — a person who acts on behalf of another person or group

Agentic — able to accomplish results with autonomy, used especially in reference to artificial intelligence

Agency — the ability to make decisions and act independently

I've had 1,821 conversations with ChatGPT over three years. Thirty thousand messages. And for most of that time, every single one started from zero. The model would engage brilliantly in the moment: reasoning through a trade setup, stress-testing a business problem, helping me think through various life decisions. But it never held any of it. At best there was some light pattern recognition across sessions and some accumulated understanding of how I think, but never a sense that we'd been here before. The conversation felt hollow, not because the model was bad, but because the interaction had no memory of me. What I got was a very capable stranger with a very good dossier on me.

I don't think this is a context window problem. Dumping 30,000 messages, or a curated selection of facts from your life, into a prompt is not memory. The model retrieves, but it doesn't understand. There's a difference. I started trying to articulate that difference, and it led me somewhere I didn't expect: epistemology, a fancy word for the theory of knowledge. How do you know what you know, and what was the process?

The Missing Primitive

The missing primitive isn't storage. It's epistemology: the theory of how knowledge is formed, evaluated, and acted upon. Until that's built into the foundation of personal AI systems, everything else is mimicry.

Loading facts into context isn't the same as knowing someone. Memory, human memory, requires belief, evidence, confidence, and the ability to revise what you know. It requires knowing not just what someone has said, but what they mean by it, how certain they are, and when that certainty should change.

AI research, computer science, cognitive biology, and philosophy have developed deep bodies of work on all of this individually, but they are almost entirely disconnected from how memory layers are actually built in AI systems today. The dominant approach is: store the text, retrieve the text, inject the text. There is no representation of confidence. No mechanism for revision that doesn't just overwrite. No distinction between something someone said once and something that defines how they operate across three years of decisions. Knowledge has no lifecycle, belief has no structure.

The result is a system that can repeat back what you told it but cannot reason about what it means, how reliable it is, or how it relates to everything else it knows about you. Again, a list of facts does not define who someone is.
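To make the contrast with "store the text, retrieve the text" concrete, here is a minimal Python sketch of a belief that carries its own evidence and a confidence derived from it. Everything in it is an assumption made for illustration: the `Evidence` and `Belief` names, the conversation ids, and the Laplace-smoothed confidence formula are hypothetical and not a description of any existing memory system.

```python
from dataclasses import dataclass, field


@dataclass
class Evidence:
    """One observation that supports or contradicts a belief."""
    source: str      # hypothetical conversation id
    supports: bool   # True if it supports the claim, False if it contradicts it


@dataclass
class Belief:
    """A claim about a person, kept together with the evidence behind it."""
    claim: str
    evidence: list[Evidence] = field(default_factory=list)

    def observe(self, source: str, supports: bool) -> None:
        # New evidence is appended; nothing already recorded is overwritten.
        self.evidence.append(Evidence(source, supports))

    @property
    def confidence(self) -> float:
        # Assumed scoring rule: Laplace-smoothed share of supporting evidence,
        # (supporting + 1) / (total + 2). A real system may need something richer.
        supporting = sum(1 for e in self.evidence if e.supports)
        return (supporting + 1) / (len(self.evidence) + 2)


# Something said once.
once = Belief("prefers small position sizes")
once.observe("conv-0042", supports=True)

# Something observed repeatedly, with one contradicting data point.
pattern = Belief("cuts losing trades quickly")
for i in range(8):
    pattern.observe(f"conv-{i:04d}", supports=True)
pattern.observe("conv-0900", supports=False)

print(round(once.confidence, 2), round(pattern.confidence, 2))
```

The point of the sketch is only that a one-off remark and a long-running pattern end up with different confidence, and that a contradiction lowers confidence instead of silently replacing the claim.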

Ownership Is Not Optional

Lock-in of memory violates an individual's agency.

The first place this matters is ownership. Every interaction I have with an AI is generating something valuable: a model of how I think, what I prioritize, how I reason under pressure, and where my blind spots are. That model is being built whether or not I can see it, correct it, or take it with me when I leave. The platforms that build it (ChatGPT memory, Claude Projects) do it opaquely. I can't inspect the representation. I can't directly correct what's wrong with it. I can't export it. I can't use it with a different provider. That's not a design quirk; it's a structural choice. The memory of you is an asset, and right now it belongs to the platform.

I think this is wrong in a way that will only become more consequential over time. If the way I reason, the context I carry, and the trajectory of my thinking are locked inside a provider's system, then my intelligence is not portable. And intelligence that is not portable is not mine.

The individual must own their AI interactions, not as a policy position, but as a technical requirement. All interactions, derived knowledge, and accumulated understanding should belong to the person and be accessible only on their terms. This isn't about privacy in the conventional sense. It's about who controls the cognitive layer of your life.

I believe this will only become more important as language models continue to be commoditized.

Agents Act on Your Behalf, But They Need to Know You

An agent with real autonomy, one that can write code, browse the web, manage a calendar, and execute financial transactions, will make thousands of small decisions that require an implicit understanding of your priorities, your constraints, your risk tolerance, and your values. Those decisions don't come with a prompt. They require inference from a model of who you are.

The conversation about AI agents has been almost entirely about capability. What can the agent do? How many tools can it use? How autonomously can it plan and execute? The question that has received almost no serious attention is: Does the agent know whom it's working for?

Without that model, the agent is powerful in the generic sense and unreliable in the specific sense. It will optimize for an average user in the absence of better information. It will make reasonable-sounding decisions that are wrong for you, not because it failed, but because it was never equipped to succeed.

In an agentic world, misalignment doesn't produce a bad answer. It produces a wrong action. The stakes are categorically different.

What agents actually need is an identity layer, a compressed, accurate model of the human they're acting on behalf of. Not facts loaded into a context window, but a behavioral model: how this person reasons, where they draw lines, what they prioritize when constraints conflict. The agent consults that model the way a trusted employee consults their understanding of their manager: not for explicit instructions on every decision, but for calibrated judgment across all of them.

Human oversight in this world is contextual, not constant. You can't approve every action. But you can demand that the agent operating on your behalf is working from an accurate model of you, one that is built on you, can be inspected by you, and can be corrected by you.

Agency flows through the human, never away from them.

Centralized Intelligence Is a Structural Risk

Decentralized AI creates resilience. Centralized AI creates dependence. The question is whether we build the right architecture before the wrong one becomes too entrenched to displace.

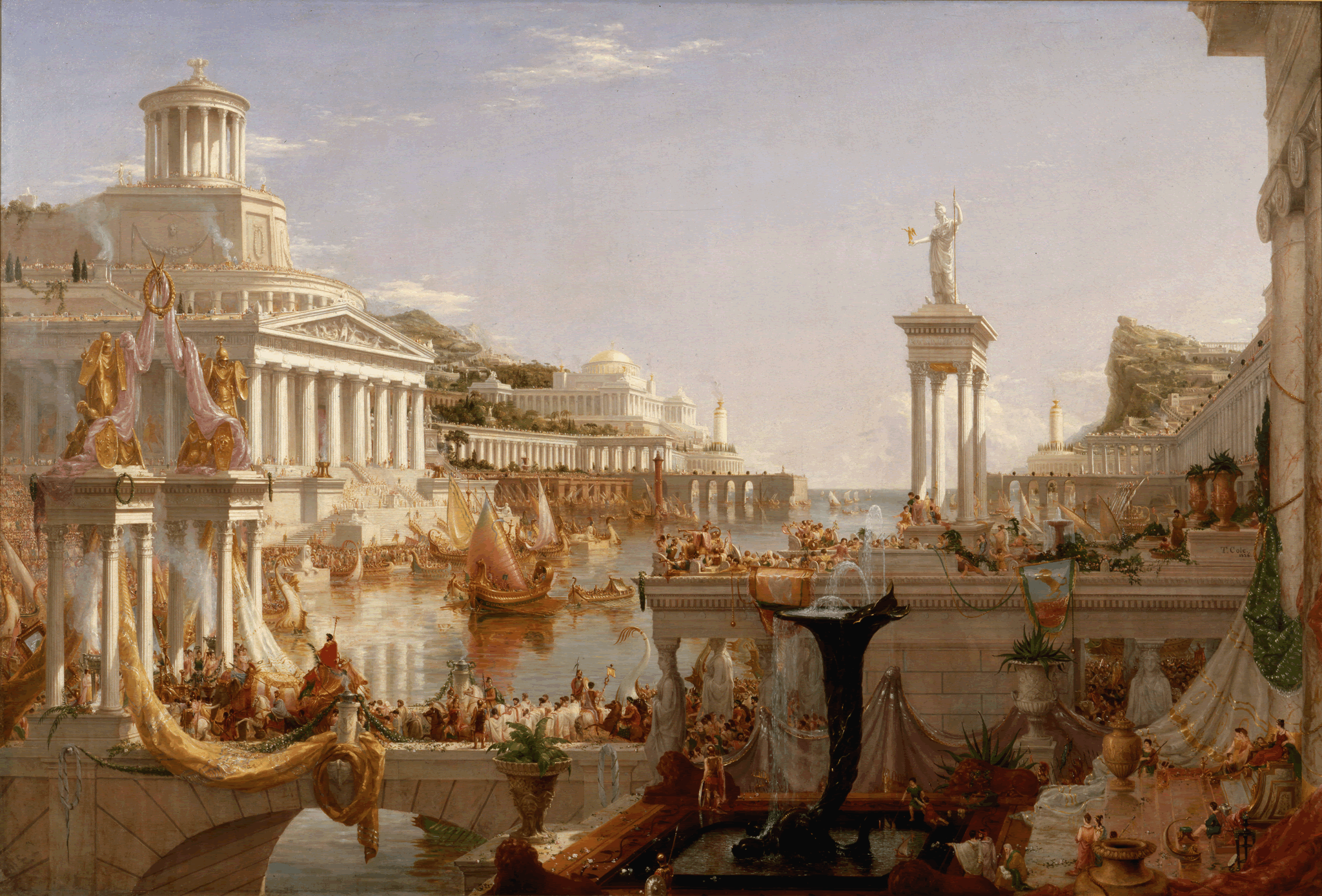

The natural endpoint of the current trajectory is five companies owning the cognitive layer of a billion people. Their systems will know more about those people than the people know about themselves. That knowledge will be used to generate profit. The people who contributed that knowledge, through every conversation, every preference expressed, every vulnerability revealed, will have no visibility into what was extracted, no ability to correct it, and no way out that doesn't cost them their history.

This is not a speculation about malice. It's a structural consequence of centralized intelligence at scale. Centralized intelligence centralizes power. No single entity should own the cognitive layer of society.

The alternative is decentralized ownership of personal AI. Each person's knowledge, memory, and derived understanding lives locally, on their machines, their handheld supercomputers, under their control, portable across any system they choose to use. The intelligence is not centralized; it's federated. Agents can be built on top of it. Institutions can be granted access to it on individual terms. But the substrate belongs to the person.

Same Mind, New Model

There's another dimension to this that I don't think gets enough attention: continuity.

Large language models are stateless by design. Every version is a new model: different weights, different training, different capabilities. The model you used two years ago is not the model you use today. The model you use today will not be the model you use in two years.

If your memory lives inside the model, or worse, is just implicitly encoded in the provider's fine-tuning of it, then every model upgrade is a discontinuity. Your history, your accumulated understanding, the trajectory of how you've been thinking: all of it goes through an opaque transformation that you can't inspect or verify.

The right architecture separates the memory from the model entirely. Memory lives in a system around the model. The model can change (upgrade, swap providers, move from cloud to local) and the memory must persist. The understanding of who you are doesn't reset when the substrate changes. Same mind, new model.
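As a rough illustration of that separation, the sketch below keeps memory in a local file and treats the model as a swappable component behind a small interface. The names `Model`, `MemoryStore`, `Assistant`, and `EchoModel` are hypothetical, and `EchoModel` is a stand-in rather than a real provider client. The memory here is deliberately naive (plain notes pasted into the prompt); the sketch shows only that memory can outlive the model, not what good memory looks like.

```python
import json
import tempfile
from pathlib import Path
from typing import Protocol


class Model(Protocol):
    """Anything that can turn a prompt into text (hypothetical interface)."""
    def complete(self, prompt: str) -> str: ...


class MemoryStore:
    """Memory kept in a local JSON file, outside of any model."""

    def __init__(self, path: Path) -> None:
        self.path = path
        # Load existing notes if the file is already there.
        self.notes: list[str] = json.loads(path.read_text()) if path.exists() else []

    def remember(self, note: str) -> None:
        self.notes.append(note)
        self.path.write_text(json.dumps(self.notes))


class Assistant:
    """Pairs whichever model is current with the same persistent memory."""

    def __init__(self, model: Model, memory: MemoryStore) -> None:
        self.model = model
        self.memory = memory

    def ask(self, question: str) -> str:
        context = "\n".join(self.memory.notes)
        return self.model.complete(f"{context}\n\n{question}")


class EchoModel:
    """Stand-in for a real provider; it just tags the prompt with its own name."""

    def __init__(self, name: str) -> None:
        self.name = name

    def complete(self, prompt: str) -> str:
        return f"[{self.name}] {prompt}"


with tempfile.TemporaryDirectory() as tmp:
    path = Path(tmp) / "memory.json"

    old = Assistant(EchoModel("model-v1"), MemoryStore(path))
    old.memory.remember("Prefers conservative risk limits.")

    # Swap the model; reload memory from the same file.
    new = Assistant(EchoModel("model-v2"), MemoryStore(path))
    answer = new.ask("How should I size this trade?")

print(answer)
```

Swapping `model-v1` for `model-v2` changes nothing about what is remembered, because the file on disk, not the model, is the source of truth.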

This requires that identity be treated as a process, not a parameter. You are not a fixed point. You change. Your views evolve, your priorities shift, your understanding deepens. A real identity layer tracks that evolution: not overwriting what you believed before, but linking it to what you believe now, preserving the trajectory. Belief revision, not belief replacement.
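One way to picture belief revision as opposed to belief replacement is a chain of immutable versions, where each new belief links back to the one it revises. This is a minimal sketch under that assumption; the `BeliefVersion` name, the example statements, and the dates are all hypothetical.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class BeliefVersion:
    """One immutable snapshot of a belief, linked to the version it revises."""
    statement: str
    recorded_on: str                          # ISO date string (illustrative)
    previous: Optional["BeliefVersion"] = None

    def revise(self, statement: str, recorded_on: str) -> "BeliefVersion":
        # Revision creates a new version that points back at this one;
        # the earlier belief is never modified or deleted.
        return BeliefVersion(statement, recorded_on, previous=self)

    def trajectory(self) -> list[str]:
        # Walk the chain back to the first version, then return oldest first.
        history: list[str] = []
        node: Optional[BeliefVersion] = self
        while node is not None:
            history.append(node.statement)
            node = node.previous
        return list(reversed(history))


v1 = BeliefVersion("Remote work hurts my focus", "2023-04-01")
v2 = v1.revise("Remote work helps my focus if I keep a fixed schedule", "2025-09-15")

for statement in v2.trajectory():
    print(statement)
```

Because every version is frozen and linked, the current belief and the path that led to it can both be inspected, which is the property an overwrite-in-place store loses.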

Model upgrades must preserve belief trajectories. Intelligence must persist as substrates change. This is technically achievable. It's just not an architectural priority for the systems being built today. That's a choice we can change.

Toward a Digital Twin of Humanity

The result is not a replacement of human judgment. It's an extension of it. Each individual's agency, values, and constraints are encoded into their digital representation. When two agents interact, they're not two autonomous systems optimizing independently; they're two human perspectives, mediated efficiently, with the humans able to inspect and override any decision that matters.

Every person has a persistent digital counterpart: a compressed, portable, sovereign representation of how they think, what they value, and how they operate. That counterpart travels with them across systems, agents, and providers. It evolves as they evolve.

Agents carry that counterpart into every interaction. They negotiate, coordinate, and execute on behalf of the human, asynchronously, across systems, at scales no individual could manage manually. Your agent schedules the meeting. Your agent evaluates the contract. Your agent coordinates with another agent representing a counterparty, each operating from an accurate model of the human they represent.

This is not the metaverse. It's not a simulation. It's a coordination layer for human agency at scale.

The collective intelligence that emerges from this, millions of agents carrying accurate models of the humans they represent, coordinating across institutions and borders, is not centralized anywhere. It's emergent from the aggregate of individual sovereignty. No single system owns it. No single actor can corrupt it. The intelligence is distributed because the ownership is distributed.

Human Agency Is the Constant

What ties this together is not the technology. The technology is a means, not an end.

The constant is human agency, the principle that individuals should be the authors of their own cognitive lives, not the raw material from which others build products.

Ownership protects agency by ensuring that the cognitive layer belongs to the individual.

Epistemology grounds agency by ensuring that the model of you is accurate, revisable, and auditable, not a black-box inference built for someone else's purposes.

Agents extend agency by giving individuals the capacity to act at scales and speeds that weren't previously possible, without surrendering the values and constraints that define who they are.

Decentralization preserves agency by ensuring that no single entity can accumulate the cognitive layer of society and use it as leverage.

Continuity sustains agency by ensuring that intelligence persists as substrates change, that the individual's accumulated understanding isn't erased every time a model upgrades.

Digital twins amplify agency by creating a durable, portable representation of the individual that can operate in distributed systems without the individual being present for every transaction.

The agentic world is coming. The question is whether it's built on human agency or on the extraction of it. I think the answer is worth building for.

Base Layer is the first layer of this stack: a compressed, auditable, locally owned behavioral model, readable by any agent, portable across any provider, owned by the individual. It is the identity layer that the agentic world requires.

My interest in building something that can compress my identity into a machine-shareable form stems from a number of serendipitous conversations, with myself and with others, that forced me to consider what my worldview is for an agentic future: where AI is headed, how we ensure it benefits society, and what primitives and frameworks need to be in place for that to happen. That worldview is what I want to work towards.

I have a recurring fear that I am always being pseudo-intellectual, so sharing this makes me feel quite vulnerable. I hope you enjoyed it.